<English cross post with my DCP blog>

Are you ready to enter the great world of DCIM software and jump right into the hype?

Do you actually know what you need from a DCIM solution? What are your functional requirements?

So before you jump in, let’s take a step back and look at DataCenter Information Management from the 40,000-foot level: the datacenter facility information architecture.

Let’s start with ‘data’:

Data is all around us in the datacenter environment. It’s on the post-it notes on your desk, in the dozen Excel files you maintain to collect and report measurements, and in the collection of electrical and architectural drawings sitting in your desk drawer.

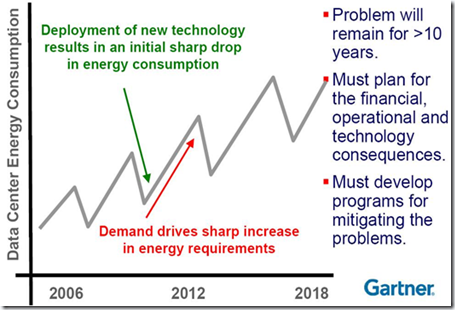

A modern-day datacenter is filled with sensors connected to control systems. Some of the equipment is connected to central SCADA or BMS systems; some handles all the process control locally at the equipment. HVAC, electrical distribution and conversion systems, access control and CCTV: they all generate data streams. With the growth of datacenters in square meters and megawatts, the amount of data grows too.

The introduction of PUE and the focus on energy efficiency have shown us the importance of data, and especially data analysis. For most of us this has introduced even more data points, but it has also enabled us to do better analysis of our datacenter’s performance. So more data has enabled more efficiency and a better return on investment. Some of us could even say we have entered the BigData era with datacenter facility data.

DCIM can play a role in the analysis of all this data, but it’s important to know where your data is first. Where is the current data stored? What are the data streams within your datacenter? What data is actually available, and what data actually matters to your operation? It’s a false assumption that all the data needs to be pulled into a DCIM solution; that depends on your processes and your information requirements.

Process

Every datacenter has its collection of structured activities or tasks that produce a specific service or product for our internal or external customer. These are the primary processes focusing on the services your datacenter needs to provide. Examples are operations processes like Work Orders or Capacity Management.

These primary processes are assisted by supporting processes that make the core (primary) processes work and optimize them. Examples are Quality, Accounting or Recruitment processes.

Identifying the primary and supporting processes in your datacenter enables you to optimize them by executing them in a consistent way every time and checking the output.

If you run an ISO9001 certified shop, you will definitely know what I’m talking about.

To run the processes we need information. Information is used in our processes to make decisions. The needed information can be collected and supplied by an individual or an (IT) system.

When data is collected it’s not yet information. Applying knowledge creates information from data. IT systems can assist us to create information from data, with built-in or collected knowledge.
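
PUE is a good illustration of this step from data to information. The sketch below is only a minimal example: the meter names and readings are made up, and using UPS output as the IT load is an assumption (your measurement points may differ). The knowledge applied is simply the definition of PUE: total facility energy divided by IT equipment energy.

```python
# Hypothetical meter readings for one day, in kWh. This is raw data, not yet information.
readings = {
    "utility_meter_kwh": 36000.0,  # total facility energy (assumed measurement point)
    "ups_output_kwh": 24000.0,     # IT equipment energy (assumed measurement point)
}


def pue(total_facility_kwh, it_equipment_kwh):
    """Apply the PUE definition: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be greater than zero")
    return total_facility_kwh / it_equipment_kwh


value = pue(readings["utility_meter_kwh"], readings["ups_output_kwh"])
print(f"PUE: {value:.2f}")
```

Two meter readings on their own say little; the ratio is something you can act on in a process.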

Identifying your datacenter processes also enables you to get a grip on the information that is needed to move the processes forward. Is this information available? What is the quality of the information and process output? How much time does it take to make it available? Can this be optimized?

DCIM solutions can assist you in creating information from data and provide information and process optimization. Most of the DCIM solutions depend on built-in knowledge on how datacenters work and operate, to facilitate this and optimize processes.

DCIM is only one of the applications used to support and optimize our datacenter processes. To support the full stack of processes we need a whole range of applications and tools. These applications can be everything from Planning to Asset Management to Customer Relationship Management (CRM) to SCADA/BMS tools.

Most of us already have some type of SCADA or BMS system running in our datacenter to control and monitor our facility infrastructure. This SCADA or BMS system will handle typical facility protocols like Modbus, BACnet or LonWorks. The programming logic used in most SCADA/BMS systems is not something found in typical DCIM solutions.

With the growing number of sensors and their data, the SCADA/BMS system must be able to handle hundreds of measurements per minute. It must store and analyze the provided data and be able to react to it, to control things like remote valves and pumps. This functionality is also typically not found in DCIM solutions. (So SCADA/BMS does not equal (!=) DCIM.)

Anyone running a production datacenter will already have a collection of applications to support their datacenter processes. You may have a ticketing system, a CRM application, MS Office applications, etc. Sometimes DCIM is perceived as the only tool you need to manage your datacenter, but it will definitely not replace all your current tools and applications.

Model

Now that you have identified your data, processes and current applications, it’s time to focus on what you need DCIM for anyway: define your functional requirements.

One way of assisting you in this definition is creating your own datacenter facility information model.

IT architects are trained in creating information models, so if you have any walking around ask them to assist you.

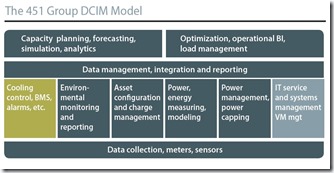

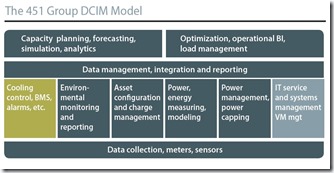

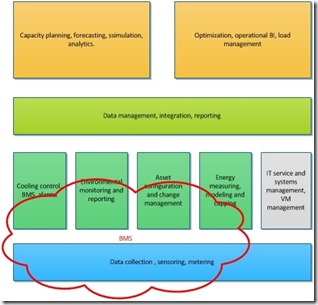

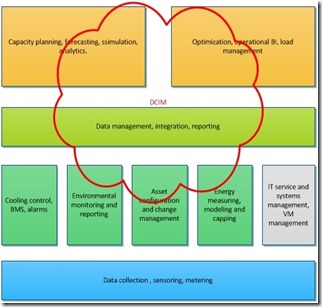

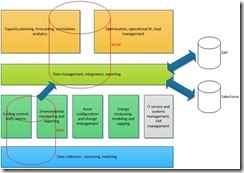

An example of such a model is the one that the 451 Group created for their DCIM analysis. It is featured in the DCK Guide to Data Center Infrastructure Management (DCIM). (The model doesn’t cover the full scope for every organization, but it helps me to explain what I mean in this blog…)

The model displays the functional areas that would typically exist when operating a datacenter.

You can use a model like this to identify what functionality you currently don’t have (from a process and application perspective) and what can be optimized.

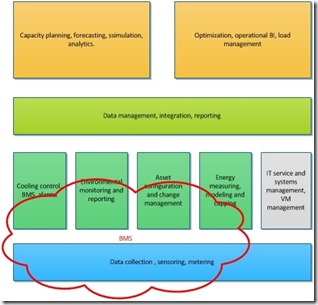

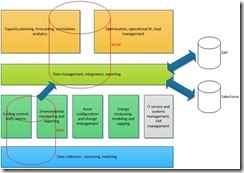

It also enables you to plot your current tools on the model and identify gaps and overlap. In this example I have plotted one of my SCADA/BMS systems on the (slightly modified) model:

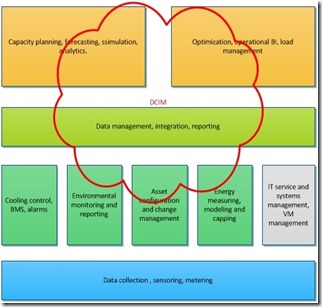

I have also plotted the DCIM need for that project:

Using models like this will give you a sense of what you actually expect from a DCIM solution and assist in creating your functional requirements for DCIM tool selection (RFP/RFI).
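
If you prefer a list over a picture, the same gap and overlap exercise can be sketched in a few lines. This is only an illustration: the functional areas and the tool coverage below are hypothetical and are not taken from the 451 Group model.

```python
from collections import defaultdict

# Hypothetical functional areas you decided you need (your own model will differ).
REQUIRED_AREAS = {
    "monitoring", "control", "alarming", "work orders",
    "capacity management", "asset management", "reporting",
}

# Hypothetical mapping of current tools to the areas they cover today.
CURRENT_TOOLS = {
    "SCADA/BMS": {"monitoring", "control", "alarming"},
    "ticketing system": {"work orders"},
    "Excel files": {"capacity management", "monitoring"},
}


def find_gaps_and_overlaps(required, tools):
    """Return areas no tool covers (gaps) and areas covered by more than one tool (overlaps)."""
    coverage = defaultdict(list)
    for tool, areas in tools.items():
        for area in areas:
            coverage[area].append(tool)
    gaps = sorted(area for area in required if area not in coverage)
    overlaps = {area: sorted(names) for area, names in coverage.items() if len(names) > 1}
    return gaps, overlaps


gaps, overlaps = find_gaps_and_overlaps(REQUIRED_AREAS, CURRENT_TOOLS)
print("Gaps:", gaps)
print("Overlaps:", overlaps)
```

The gaps are candidates for your DCIM functional requirements; the overlaps are places where you need to decide which tool wins.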

Integration is key

Modern-day IT information management consists of collections of applications and datastores, connected for optimal information exchange. IT information and business architects have already tried the ‘one application to rule them all’ approach and failed. Because creating information islands doesn’t work either, we need to enable applications and information stores to talk to each other.

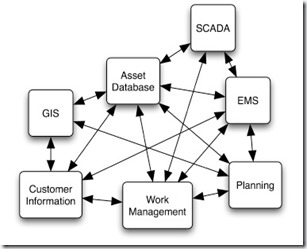

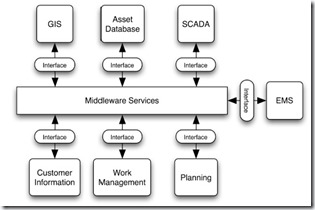

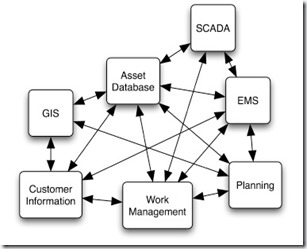

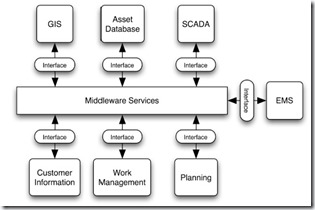

You may have some customer information about the usage of datacenter racks in a CRM system like Salesforce. You may already have some asset information about your CRACs in an asset management system or maybe a procurement system. This is all interesting and relevant information for your ‘datacenter view on the world’. Connecting all the systems and datastores could get really ugly, time-consuming and error-prone:

IT architects already struggled with this some time ago when integrating general business applications. That gave rise to concepts like service-oriented architecture (SOA), the enterprise service bus (ESB) and the application programming interface (API). All fancy words (and IT loves its three-letter acronyms) for IT architectural models that enable applications to talk to each other.

The DCIM solution you select needs to be able to integrate into your current world of IT applications and datastores.

When looking at integration, you need to decide what information is authoritative and how the information will flow. Example: you may have an asset management system containing unique asset names and numbers for your large datacenter assets like pumps, CRACs and PDUs. You would want this information to be pushed out to the DCIM solution but changes in the asset names should only be possible in the asset management system. Your asset management system would then be considered authoritative for those information fields and information will only be pushed from the asset system to DCIM and not vice versa (flow).
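
A minimal sketch of that one-way flow could look like the code below. Everything in it is hypothetical: the field names, the record layout and the two functions are my own illustration and not the API of any real asset management or DCIM product.

```python
from dataclasses import dataclass

# Fields for which the asset management system is authoritative (assumed for this sketch).
AUTHORITATIVE_FIELDS = {"asset_number", "asset_name"}


@dataclass
class DcimRecord:
    asset_number: str
    asset_name: str
    location: str = ""  # owned by DCIM, free to edit there


def push_from_asset_system(asset_rows, dcim):
    """One-way flow: create or overwrite authoritative fields in the DCIM store."""
    for row in asset_rows:
        record = dcim.get(row["asset_number"])
        if record is None:
            dcim[row["asset_number"]] = DcimRecord(row["asset_number"], row["asset_name"])
        else:
            record.asset_name = row["asset_name"]


def update_in_dcim(dcim, asset_number, field_name, value):
    """Edits made inside DCIM are refused for fields owned by the asset system."""
    if field_name in AUTHORITATIVE_FIELDS:
        raise PermissionError(f"{field_name} can only be changed in the asset management system")
    setattr(dcim[asset_number], field_name, value)


dcim_store = {}
push_from_asset_system([{"asset_number": "CRAC-01", "asset_name": "CRAC hall 1"}], dcim_store)
update_in_dcim(dcim_store, "CRAC-01", "location", "Hall 1")  # allowed: DCIM owns this field
push_from_asset_system([{"asset_number": "CRAC-01", "asset_name": "CRAC hall 1 north"}], dcim_store)
print(dcim_store["CRAC-01"])
```

A rename in the asset system flows through to DCIM and leaves the DCIM-owned location untouched, while a rename attempted inside DCIM is rejected.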

Integration also means you don’t have to pull all the data from every available data source into your DCIM solution. Select only the information and data that would really add value to your DCIM usage. Also be aware that integration is not the only way to aggregate data. Reporting tools (sometimes part of the DCIM solution) can collect data from multiple data sources and combine them in one nice report, without the need to duplicate information by pulling a copy into the DCIM database.

The 451 Group model does an excellent job of displaying this need for integration, showing the “integration and reporting” layer across all layers.
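
To make the reporting route concrete, here is a small sketch that combines two sources at report time. The rows stand in for query results from a CRM system and a power monitoring system; the customer names, rack IDs and values are invented for the example.

```python
# Hypothetical query results; in practice each list would come from its own system.
crm_rows = [
    {"customer": "Customer A", "rack_id": "R-101"},
    {"customer": "Customer B", "rack_id": "R-102"},
]
power_rows = [
    {"rack_id": "R-101", "avg_kw": 3.2},
]


def build_rack_report(crm_rows, power_rows):
    """Join both sources on rack_id at report time; nothing is copied into a DCIM database."""
    power_by_rack = {row["rack_id"]: row["avg_kw"] for row in power_rows}
    return [
        {
            "customer": row["customer"],
            "rack_id": row["rack_id"],
            "avg_kw": power_by_rack.get(row["rack_id"]),  # None when no measurement exists
        }
        for row in crm_rows
    ]


report = build_rack_report(crm_rows, power_rows)
for line in report:
    print(line)
```

Each system keeps its own data, and the report is just a view across them.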

Using your own information model you can also plot integration and data sources.

Integration within the full datacenter stack (from facilities to IT) is also key for the future of datacenter efficiency, as I mentioned in my “Where is the open datacenter facility API ?” blog.

So, to summarize:

- Look at what data you currently have, where it is stored and how that data flows across your infrastructure.

- Look at the information and functionality you need by analyzing your datacenter processes. Identify information gaps and translate them to functional requirements.

- Look at the current tools and applications: which applications to replace with DCIM and which applications to integrate with DCIM. What are the integration requirements, and which information source is authoritative?

- Create your own datacenter facility information model. Position all your current applications on the model. (If you have in-house IT (information) architects, have them assist you…)

Preparing your DCIM tool selection this way will save you from headaches and disappointment after the implementation.

In my next blog we will jump to the implementation phase of DCIM.

More resources:

Full credit for the DCIM model used in this blog goes to the 451 Group. It is taken from the excellent DCK Guide to Data Center Infrastructure Management (DCIM) at http://www.datacenterknowledge.com/archives/2012/05/22/guide-data-center-infrastructure-management-dcim/

Disclosure: between 2006 and 2012 I selected, bought and implemented three different DCIM solutions for the companies I worked for. At that time I was also part of either the beta-pilot group or the Customer Advisory Board for those vendors. That doesn’t make me a DCIM expert, but it generated some insight into what is sold and what actually works and gets used.
